Machine Vision Basics for Precision Manufacturing

From machine vision systems to precision manufacturing workflows, modern factories rely on verified technical specifications and industrial standards to reduce defects and improve throughput. For buyers, engineers, and quality teams comparing technologies such as 3D printing, metal 3D printers, additive manufacturing, fiber lasers, and nanomaterials, understanding the basics of machine vision is essential, especially as export control, compliance, and performance benchmarking increasingly shape industrial decisions.

In precision manufacturing, machine vision is no longer a niche inspection add-on. It is a core production capability that supports dimensional verification, surface defect detection, robotic guidance, code reading, and process traceability across sectors ranging from electronics and automotive to medical devices, semiconductors, and advanced materials. For research teams, procurement managers, operators, and decision-makers, the practical question is not whether machine vision matters, but how to select, deploy, and govern it correctly.

This article explains the fundamentals of machine vision in a way that is useful for industrial evaluation and implementation. It focuses on system components, common inspection tasks, performance metrics, selection criteria, integration risk, and procurement checkpoints. It also connects machine vision with broader industrial concerns such as standards alignment, measurable ROI, and supply-chain readiness.

What Machine Vision Means in Precision Manufacturing

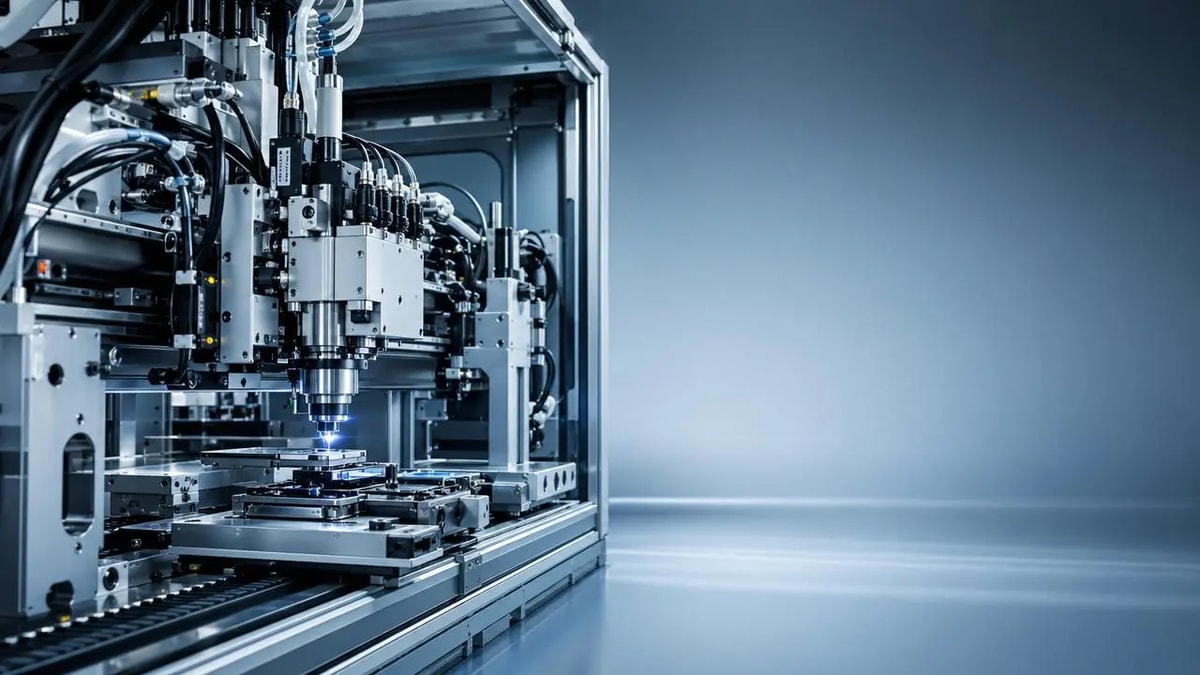

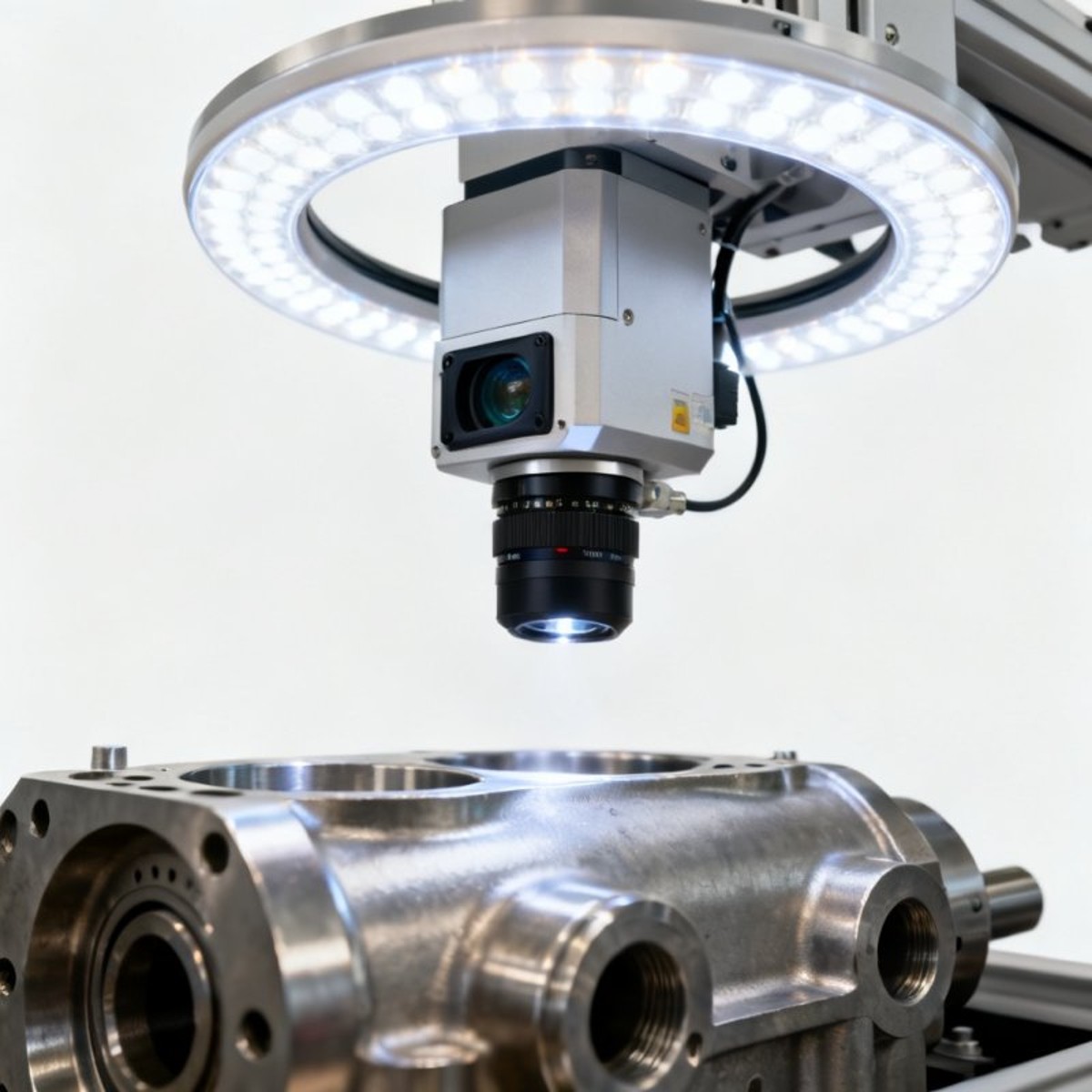

Machine vision is the use of cameras, optics, lighting, processing software, and decision logic to extract actionable information from images for industrial control. In precision manufacturing, that usually means measuring features in the micron to millimeter range, identifying missing or damaged parts, confirming assembly position, or sorting acceptable and nonconforming units at line speed.

A basic machine vision chain includes 5 elements: illumination, lens, image sensor, processing unit, and output interface. If any one of these is mismatched, even a high-resolution camera may fail to produce stable results. For example, a 12 MP camera paired with poor backlighting can miss edge contrast that a lower-resolution system with correct lighting would capture consistently.

Precision manufacturing depends on repeatability. A system that performs well for 2 hours in a lab but drifts after 3 production shifts is not suitable for factory deployment. That is why experienced teams evaluate machine vision not only by image quality, but also by cycle time, false reject rate, environmental tolerance, maintenance burden, and interface compatibility with PLC, MES, SCADA, or robotics platforms.

In high-value production lines, machine vision often supports 3 levels of control. The first is detection, such as presence or absence of a part. The second is measurement, such as hole diameter within ±0.02 mm. The third is feedback, where the system adjusts robot alignment, laser path, print calibration, or downstream sorting logic in real time.
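The three levels can be illustrated with a minimal Python sketch built around the hole-diameter example. The 5.00 mm nominal, the `inspect_hole` function, and the sign convention for the correction are hypothetical assumptions for illustration, not a vendor API. A real system would take the measured value from its own vision tool and pass any correction through its PLC or robot interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InspectionResult:
    status: str                     # "missing", "pass", or "reject"
    correction_mm: Optional[float]  # suggested offset for the upstream process, if any


def inspect_hole(part_present: bool, measured_mm: Optional[float],
                 nominal_mm: float = 5.00, tol_mm: float = 0.02) -> InspectionResult:
    # Level 1: detection. There is nothing to measure if the part is absent.
    if not part_present or measured_mm is None:
        return InspectionResult("missing", None)
    # Level 2: measurement against the ±tol_mm acceptance band.
    deviation = measured_mm - nominal_mm
    status = "pass" if abs(deviation) <= tol_mm else "reject"
    # Level 3: feedback. Return the opposite of the deviation so an upstream
    # controller (robot, laser path, printer calibration) could compensate.
    return InspectionResult(status, -deviation)


print(inspect_hole(True, 5.015))   # status 'pass', correction about -0.015 mm
print(inspect_hole(True, 5.031))   # status 'reject'
print(inspect_hole(False, None))   # status 'missing'
```

Returning a correction even for passing parts is a deliberate choice in this sketch: it lets the feedback level nudge the process before parts drift out of tolerance, rather than only reacting after a reject.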

Common inspection objectives

- Dimensional inspection for tolerances such as ±0.01 mm to ±0.10 mm, depending on optics, field of view, and calibration method.

- Surface inspection for scratches, pores, burn marks, contamination, and coating non-uniformity on metals, polymers, wafers, and composites.

- Positioning and guidance for robotic pick-and-place, welding alignment, and additive manufacturing layer monitoring.

- Identification tasks such as OCR, barcode, Data Matrix, and serialization for traceability and compliance workflows.

Why the basics matter for buyers and operators

Users and operators need systems that are stable and easy to maintain. Procurement teams need systems with verifiable specifications, spare parts visibility, and support continuity over 3–7 years. Quality teams need measurable pass/fail logic tied to acceptance criteria. When these perspectives are aligned early, deployment delays and rework can be reduced significantly.

Core Components and Performance Factors

Although machine vision is often discussed as software intelligence, hardware selection still determines most of the achievable performance. A reliable system requires matching sensor type, lens characteristics, lighting geometry, processing architecture, and enclosure design to the application. In many projects, the failure point is not algorithm quality but poor imaging conditions.

Resolution should be selected based on the smallest feature that must be detected. A practical rule is to allocate at least 3–5 pixels across the minimum defect width for detection, and often 10 pixels or more for precise metrology. If a defect is 0.05 mm wide, field of view and camera resolution must be calculated backward rather than guessed.
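The backward calculation can be sketched in a few lines of Python. The function names and the 40 mm field of view are illustrative assumptions, and the arithmetic ignores lens distortion, optical resolution limits, and subpixel edge methods, so the result is best treated as a lower bound to confirm with real images.

```python
import math


def required_pixels(fov_mm: float, min_feature_mm: float, pixels_per_feature: float) -> int:
    """Minimum sensor pixels along one axis so that the smallest feature
    spans `pixels_per_feature` pixels across the field of view."""
    raw = fov_mm / min_feature_mm * pixels_per_feature
    # Round away floating-point noise (e.g. 3200.0000000001) before taking the ceiling.
    return math.ceil(round(raw, 6))


def pixel_size_mm(fov_mm: float, sensor_pixels: int) -> float:
    """Object-space size of one pixel in mm, ignoring lens distortion."""
    return fov_mm / sensor_pixels


# 40 mm field of view, 0.05 mm minimum defect
print(required_pixels(40, 0.05, 4))    # 3200 pixels for detection
print(required_pixels(40, 0.05, 10))   # 8000 pixels for metrology
print(pixel_size_mm(40, 4096))         # about 0.0098 mm per pixel
```

At 10 pixels per feature the requirement along one axis reaches 8000 pixels, which may justify splitting the part into smaller fields of view instead of searching for a single larger sensor.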

Lighting is equally critical. Bright field, dark field, diffuse dome, coaxial, and backlight setups each emphasize different defect modes. Surface scratches on polished metal may appear under low-angle dark field lighting, while outer profile measurement may require high-contrast backlighting. Inconsistent illumination can raise false reject rates from below 1% to more than 5% in demanding lines.
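One way to quantify the effect of a lighting change is to run the same labeled sample set under each setup and compare the false reject rate (good parts rejected) with the escape rate (defective parts passed). This Python sketch assumes each sample is recorded as a hypothetical `(is_actually_good, vision_says_pass)` pair; the setups and counts below are invented for illustration.

```python
from typing import Iterable, Tuple


def reject_metrics(samples: Iterable[Tuple[bool, bool]]) -> Tuple[float, float]:
    """samples: (is_actually_good, vision_says_pass) for each labeled part.
    Returns (false_reject_rate, escape_rate) as fractions."""
    good = bad = false_rejects = escapes = 0
    for actually_good, passed in samples:
        if actually_good:
            good += 1
            if not passed:
                false_rejects += 1  # good part rejected
        else:
            bad += 1
            if passed:
                escapes += 1        # defective part accepted
    false_reject_rate = false_rejects / good if good else 0.0
    escape_rate = escapes / bad if bad else 0.0
    return false_reject_rate, escape_rate


# The same 50 labeled samples (40 good, 10 defective) under two hypothetical lighting setups.
setup_a = [(True, True)] * 39 + [(True, False)] * 1 + [(False, False)] * 10
setup_b = [(True, True)] * 37 + [(True, False)] * 3 + [(False, False)] * 9 + [(False, True)] * 1
print(reject_metrics(setup_a))  # (0.025, 0.0)
print(reject_metrics(setup_b))  # (0.075, 0.1)
```

Tracking both rates matters because a lighting change that lowers false rejects can quietly raise escapes, and the two carry very different costs.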

Processing architecture also shapes deployment. Edge-based smart cameras can simplify installation for single-station checks, while PC-based multi-camera systems are often better for high-throughput or AI-assisted inspection. In regulated or export-sensitive environments, data handling, software licensing, and remote access policies should be reviewed before the purchase order is issued.

Typical component choices by task

The table below summarizes common machine vision elements used in precision manufacturing and the trade-offs that matter during system specification.

For high-precision gauging, telecentric optics and stable backlighting are often more valuable than simply moving to a larger sensor. For variable products or textured surfaces, flexible lighting and configurable software recipes usually deliver better long-term value than a fixed, single-condition setup.

Minimum technical checks before approval

- Confirm required pixel resolution at the smallest critical feature, not at the total part size.

- Test at least 30–50 real production samples, including known defects and borderline parts.

- Measure repeatability across 2–3 shifts if the process is temperature- or vibration-sensitive.

- Verify communication support such as Ethernet/IP, PROFINET, Modbus TCP, or digital I/O.
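For the multi-shift repeatability check, a simple summary is the spread of repeated measurements on one reference part, the shift-to-shift change in the mean, and a precision-to-tolerance (P/T) ratio. This Python sketch uses the 6σ convention for P/T (some procedures use 5.15σ instead); the function name, data layout, and sample values are assumptions, and a formal gauge R&R study would also separate operator and part variation.

```python
import statistics
from typing import Dict, List


def repeatability_report(shifts: Dict[str, List[float]], tolerance_band_mm: float) -> dict:
    """shifts: repeated measurements of one reference part, grouped by shift.
    tolerance_band_mm: upper minus lower specification limit for the feature."""
    all_values = [v for values in shifts.values() for v in values]
    sigma = statistics.stdev(all_values)            # overall sample standard deviation
    means = {name: statistics.mean(values) for name, values in shifts.items()}
    return {
        "sigma_mm": sigma,
        "pt_ratio": 6 * sigma / tolerance_band_mm,  # 6-sigma P/T convention
        "mean_drift_mm": max(means.values()) - min(means.values()),
    }


# Hole diameter with a ±0.02 mm tolerance, so the band is 0.04 mm.
report = repeatability_report(
    {"shift_1": [5.001, 4.999, 5.000], "shift_2": [5.002, 5.000, 5.001]},
    tolerance_band_mm=0.04,
)
print(report)  # sigma about 0.00105 mm, P/T about 0.157, mean drift about 0.001 mm
```

A growing `mean_drift_mm` between shifts points to thermal or vibration effects rather than random noise, which is exactly what a single-shift lab test cannot reveal.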

Where Machine Vision Delivers Value Across Industrial Workflows

Machine vision supports more than end-of-line inspection. In advanced manufacturing environments, it can be placed before, during, and after production to reduce scrap earlier. Pre-process checks confirm raw part orientation and identity. In-process systems monitor alignment, bead shape, layer consistency, or tool position. Post-process stations verify dimensions, surface condition, and marking quality before shipment.

This broad applicability is why machine vision is increasingly evaluated alongside 3D printing, fiber lasers, and nanomaterial-based products. In additive manufacturing, vision can monitor powder spread consistency, layer registration, and printed code readability. In laser processing, it helps with seam tracking, weld inspection, and burn pattern control. In advanced materials production, it supports uniformity checks where manual inspection is slow or subjective.

For procurement and business evaluation teams, the value of machine vision is best expressed through operational metrics. These may include a 15%–40% reduction in manual inspection hours, improved first-pass yield, lower customer returns, and faster root-cause isolation. Actual results vary by process maturity, but the strongest projects link image data to corrective action rather than using vision only as a passive gate.

The right use case usually has 3 characteristics: repeatable inspection criteria, clear defect economics, and line conditions stable enough for calibration control. If defect definition is vague, if each part is highly unique, or if upstream variation is extreme, machine vision may still help, but solution design should include recipe management or AI-based classification rather than only rule-based logic.

Representative applications by process stage

The matrix below shows how machine vision is commonly positioned in precision manufacturing workflows and what each stage is expected to deliver.

A key takeaway is that machine vision delivers the highest value when integrated into the process loop. A final inspection camera can catch defects, but an in-process system can prevent them. For high-throughput lines, this difference has a direct impact on scrap cost, operator workload, and delivery reliability.

Typical environments where vision is prioritized

- High-volume assembly lines where cycle times are below 2 seconds per station.

- High-value components where each defect may trigger rework, warranty cost, or compliance review.

- Traceable industries requiring serialization, lot control, or image-based audit records for 1–5 years.

How to Specify and Procure a Machine Vision System

A successful purchase starts with a clear inspection specification, not a product brochure. Buyers should define the defect types, part variation range, cycle time, pass/fail logic, environmental constraints, and data output requirements before comparing vendors. Without this structure, quotations become difficult to compare because each supplier interprets the application differently.
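A specification can be captured as structured data so that every supplier quotes against the same fields. The `InspectionSpec` class and its field names are a hypothetical Python sketch mirroring the items listed above, not a standard schema.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class InspectionSpec:
    defect_types: List[str] = field(default_factory=list)
    part_variation: str = ""               # e.g. range of sizes, colors, surface finishes
    cycle_time_s: Optional[float] = None
    pass_fail_logic: str = ""
    environmental_constraints: str = ""    # e.g. temperature, vibration, coolant mist
    data_outputs: List[str] = field(default_factory=list)

    def missing_items(self) -> List[str]:
        """Fields still undefined; quotations are hard to compare until this is empty."""
        return [f.name for f in fields(self) if getattr(self, f.name) in (None, "", [])]


spec = InspectionSpec(defect_types=["scratch", "pore"], cycle_time_s=1.5)
print(spec.missing_items())
# ['part_variation', 'pass_fail_logic', 'environmental_constraints', 'data_outputs']
```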

For industrial sourcing, at least 4 evaluation layers should be reviewed: technical fit, validation evidence, lifecycle support, and compliance exposure. Technical fit includes optics, lighting, speed, and software capability. Validation evidence includes sample testing and gauge repeatability. Lifecycle support includes spare parts, remote diagnostics, and local service response. Compliance exposure includes data governance, export control sensitivity, and standards alignment.
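The four evaluation layers can be combined into one comparable number with a weighted score. In this Python sketch the 1 to 5 scale, the weights, and the convention that a higher compliance score means lower exposure are illustrative assumptions; a team may also prefer to treat a failed compliance review as a hard stop rather than a weighted factor.

```python
from typing import Dict

LAYERS = ("technical_fit", "validation_evidence", "lifecycle_support", "compliance_exposure")


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """scores: 1-5 per layer (for compliance_exposure, higher = lower risk).
    weights: relative importance per layer; they are normalized here."""
    missing = [layer for layer in LAYERS if layer not in scores or layer not in weights]
    if missing:
        raise ValueError(f"layers without a score or weight: {missing}")
    total_weight = sum(weights[layer] for layer in LAYERS)
    return sum(scores[layer] * weights[layer] for layer in LAYERS) / total_weight


weights = {"technical_fit": 0.40, "validation_evidence": 0.25,
           "lifecycle_support": 0.20, "compliance_exposure": 0.15}
vendor_a = {"technical_fit": 4, "validation_evidence": 3,
            "lifecycle_support": 4, "compliance_exposure": 5}
vendor_b = {"technical_fit": 5, "validation_evidence": 2,
            "lifecycle_support": 3, "compliance_exposure": 3}
print(round(weighted_score(vendor_a, weights), 2))  # 3.9
print(round(weighted_score(vendor_b, weights), 2))  # 3.55
```

In this example the vendor with the best technical fit still ranks lower, because weak validation evidence and lifecycle support pull its overall score down.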

Project teams should also define an acceptance plan. A common structure is a 3-stage process: factory acceptance test, site acceptance test, and production stability review after 2–4 weeks. This helps distinguish installation success from operational success. It also gives procurement and engineering teams a shared basis for release payments and performance confirmation.

Commercially, the lowest initial quotation may not be the lowest total cost. If recipe changes require vendor intervention, if lighting modules are proprietary, or if calibration takes 4 hours every week, operating cost rises quickly. In contrast, a slightly higher initial investment may pay back within 12–18 months if it reduces manual inspection labor and prevents recurring escapes.
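The total-cost comparison can be made explicit with a simple payback calculation in Python. Only the 4-hour weekly calibration comes from the text above; the hourly rate of 50, the 20,000 price difference, and the 800 per month of avoided manual inspection are hypothetical figures in arbitrary currency units.

```python
import math


def monthly_cost_of_weekly_task(hours_per_week: float, hourly_rate: float) -> float:
    """Convert a recurring weekly labor task into an average monthly cost."""
    return hours_per_week * hourly_rate * 52 / 12


def payback_months(extra_investment: float, monthly_savings: float) -> float:
    """Months until a higher initial price is recovered; inf if it never is."""
    if monthly_savings <= 0:
        return math.inf
    return extra_investment / monthly_savings


# Hypothetical: the cheaper system needs 4 h of calibration every week at 50/h;
# the more expensive one costs 20,000 more but avoids that and saves 800/month of manual inspection.
calibration = monthly_cost_of_weekly_task(4, 50)             # about 866.67 per month
print(round(payback_months(20_000, calibration + 800), 1))   # 12.0
```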

Procurement checklist for cross-functional teams

The table below can be used by engineering, quality, and sourcing teams when comparing machine vision proposals for precision manufacturing lines.

This approach prevents misalignment between users, project managers, and commercial teams. It also creates a stronger basis for comparing domestic and international suppliers, especially when lead time, support geography, or regulatory review could affect project timing.

Recommended implementation sequence

- Define measurable inspection criteria and collect real samples.

- Run a feasibility test under line-like conditions, not only in a lab.

- Freeze interfaces, data outputs, and acceptance thresholds.

- Complete FAT and SAT with documented image records and exception handling.

- Review performance after the first 2–4 weeks of live production.

Common Risks, Misconceptions, and Practical FAQ

One common misconception is that more megapixels automatically mean better inspection. In reality, lens distortion, vibration, unstable lighting, and poor calibration can eliminate the theoretical benefit of higher resolution. Another misconception is that AI can solve any inspection problem. AI is powerful for variable surface defects, but it still depends on good image acquisition and a representative training dataset.

Another risk is ignoring environmental drift. Dust, heat, ambient light, coolant mist, or fixture wear can slowly degrade image quality over weeks. A system that begins at 98% classification consistency may drop if preventive checks are not planned. For this reason, maintenance teams should define inspection of optics, light intensity, enclosure cleanliness, and calibration reference parts at scheduled intervals such as weekly or monthly.
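Scheduled checks are easier to act on when each result is compared against a baseline recorded at acceptance. This Python sketch assumes two hypothetical health metrics, the mean grey level measured on a fixed target and the measured size of a calibration reference part; the 10% and 0.005 mm alert limits are placeholders to be set from the line's own tolerances.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HealthCheck:
    light_intensity: float          # mean grey level on a fixed target (hypothetical metric)
    reference_dimension_mm: float   # measured size of a calibration reference part


def drift_alerts(baseline: HealthCheck, current: HealthCheck,
                 max_intensity_drop: float = 0.10,
                 max_dimension_shift_mm: float = 0.005) -> List[str]:
    alerts = []
    drop = (baseline.light_intensity - current.light_intensity) / baseline.light_intensity
    if drop > max_intensity_drop:
        alerts.append(f"light intensity down {drop:.0%}: clean optics, check light source")
    shift = abs(current.reference_dimension_mm - baseline.reference_dimension_mm)
    if shift > max_dimension_shift_mm:
        alerts.append(f"reference part off by {shift:.3f} mm: recalibrate")
    return alerts


baseline = HealthCheck(light_intensity=200, reference_dimension_mm=10.000)
print(drift_alerts(baseline, HealthCheck(195, 10.001)))  # []
print(drift_alerts(baseline, HealthCheck(170, 10.008)))  # two alerts
```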

Data management should also be addressed early. If images are stored for traceability, teams must decide resolution, retention period, indexing method, and access permissions. Even a moderate line capturing 1 image per part can create large datasets over 6–12 months. Storage cost, review workflow, and cybersecurity policies should therefore be included in the deployment plan.
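A rough storage estimate helps size retention before deployment. This Python sketch assumes a hypothetical 2 MB per stored image, a 2-second cycle (1,800 parts per hour), and 16 production hours per day; real image sizes depend on sensor resolution, bit depth, and compression.

```python
def storage_tb(parts_per_hour: float, hours_per_day: float, days: int,
               mb_per_image: float, images_per_part: int = 1) -> float:
    """Raw image storage in terabytes (decimal: 1 TB = 1,000,000 MB), before backups."""
    images = parts_per_hour * hours_per_day * days * images_per_part
    return images * mb_per_image / 1_000_000


# 2 s cycle -> 1,800 parts/hour; 16 h/day; roughly 6 and 12 months of production days
print(storage_tb(1800, 16, 180, 2.0))   # 10.368
print(storage_tb(1800, 16, 360, 2.0))   # 20.736
```

Even at one image per part, a single station in this scenario accumulates on the order of 10 TB in six months, before any backup copies.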

For global projects, decision-makers should not overlook supply-chain and regulatory visibility. Some components, software modules, or imaging technologies may be affected by export control review, regional service constraints, or country-specific import requirements. Technical benchmarking and compliance screening help reduce sourcing surprises during the project execution phase.

How accurate can machine vision be?

Accuracy depends on field of view, optics, lighting, calibration, fixturing, and the measurement method. In practical industrial setups, dimensional inspection may range from around ±0.10 mm for larger fields of view to ±0.01 mm or better for optimized small-area systems. Suppliers should state repeatability and test conditions, not just theoretical resolution.

How long does implementation usually take?

A straightforward single-station vision project can often move from feasibility to line use in 4–8 weeks. Multi-camera or highly customized systems may require 8–16 weeks, especially when mechanical fixtures, robot interfaces, or factory IT approvals are involved. Projects move faster when sample parts and acceptance criteria are available from day 1.

When should AI-based inspection be considered?

AI-based machine vision is often useful when defect appearance changes significantly, when surface texture is complex, or when rule-based thresholds generate too many false calls. However, teams should prepare labeled image datasets, validation rules, and retraining procedures. For many stable geometric inspections, conventional vision remains easier to validate and maintain.

What should operators be trained on?

Operators should understand 4 basics: daily startup checks, cleaning and handling of optics, alarm interpretation, and recipe selection for part variants. A short but structured training plan of 2–4 hours for operators and a deeper session for maintenance and engineers can prevent avoidable downtime and misclassification events.

Machine vision basics are not only a technical topic; they are a business-critical foundation for precision manufacturing quality, process control, and procurement confidence. When camera selection, lighting design, validation method, and compliance review are handled systematically, machine vision becomes a measurable asset rather than a trial-and-error project.

For organizations evaluating machine vision alongside additive manufacturing, laser processing, advanced materials, or other high-tech production investments, a structured benchmarking approach is essential. G-AIT supports this need by focusing on verified technical data, industrial standards, and decision-ready intelligence that helps buyers, engineers, and quality leaders compare technologies with greater clarity.

If you are planning a new inspection line, upgrading a precision manufacturing workflow, or reviewing suppliers for a regulated global project, now is the right time to align technical requirements with procurement and compliance priorities. Contact us to discuss your application, request a tailored evaluation framework, or learn more about machine vision and related advanced industrial solutions.


Dr. Victor Gear
