When Does AI Inspection Beat Manual QC?

In precision manufacturing, the tipping point between manual QC and AI inspection is no longer theoretical. As machine vision advances across 3D printing, metal 3D printers, additive manufacturing, and fiber lasers, buyers and operators need clear benchmarks tied to industrial standards, technical specifications, and risk controls. This article examines when AI delivers measurable gains in speed, consistency, and traceability, and when human judgment still matters most.

When is AI inspection the better quality control choice?

AI inspection beats manual QC when defect detection must remain stable across long production runs, multiple operators, and complex geometries. In cross-industry environments, this usually appears when parts move from low-volume validation into medium or high-volume production, or when traceability must be maintained for 12–36 months under customer or regulatory review. For procurement teams and quality managers, the key question is not whether AI is modern, but whether it reduces escape risk, review time, and inconsistency at a measurable level.

Manual inspection still performs well in early-stage prototyping, rare defect discovery, and low-frequency checks where the defect library is incomplete. An experienced inspector can identify unusual cosmetic issues, assembly context, or process clues that a narrowly trained model may miss. However, once inspection criteria become repeatable and the takt time tightens to seconds or a few minutes per part, human-only QC often struggles with fatigue, shift-to-shift variation, and incomplete records.

Across machine vision and optical inspection programs reviewed by industrial buyers, the break-even point usually depends on 4 practical conditions: defect repeatability, image quality stability, throughput pressure, and documentation needs. If at least 3 of these 4 are already defined, AI inspection is often the stronger option. G-AIT helps decision-makers benchmark these conditions against ISO, ASTM, IEEE, and SEMI-aligned workflows so implementation is based on engineering evidence rather than vendor claims.

This matters across many sectors, not only electronics or automotive. Fiber laser processing requires fast verification of weld seams, cut edges, and heat-affected zones. Additive manufacturing needs layer-wise or post-build checks for porosity signals, dimensional drift, and surface irregularities. Vacuum engineering and nano-material production require inspection discipline where contamination, micro-defects, or subtle deviations can affect downstream performance. In these settings, AI inspection becomes valuable when consistency is more important than occasional subjective judgment.

Four signals that manual QC is approaching its limit

- Inspection criteria are documented, but two or more inspectors still classify the same defect differently during the same week or lot.

- Line speed requires decisions within 2–10 seconds, leaving little room for rechecking, supervisor review, or photo archiving.

- Customers require digital records, image-linked pass/fail history, or lot traceability for 12 months or longer.

- The cost of a false accept is much higher than the cost of an additional machine vision station, especially in Tier-1 or export-sensitive supply chains.

If these signals are present, AI inspection should be evaluated as a production control system rather than a simple camera upgrade. That distinction is important for business evaluators and project managers, because the return comes from reduced rework, lower dispute risk, and more stable outgoing quality—not only from labor replacement.
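The first signal, inspectors classifying the same defect differently, can be put on a number before any equipment is discussed. The sketch below uses Cohen's kappa, a standard chance-corrected agreement statistic; the article does not prescribe this metric, and the label names and sample data are invented for illustration.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two inspectors on the same parts."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Share of parts on which both inspectors gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each inspector's label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both inspectors used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical labels for eight parts from the same lot.
inspector_1 = ["pass", "pass", "scratch", "pass", "dent", "pass", "scratch", "pass"]
inspector_2 = ["pass", "scratch", "scratch", "pass", "pass", "pass", "scratch", "pass"]
kappa = cohens_kappa(inspector_1, inspector_2)
print(round(kappa, 3))
```

A kappa near 1 means the two inspectors agree well beyond chance, while a value near 0 suggests the written criteria are not producing consistent decisions.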

Manual QC vs AI inspection: what changes in speed, accuracy, and traceability?

The most useful comparison is not human versus machine in absolute terms, but task versus task. Some inspections are rule-based and image-rich. Others depend on context, engineering judgment, or root-cause reasoning. The table below gives a practical comparison that procurement personnel, operators, and quality leads can use during line planning or system upgrades.

The practical lesson is clear: AI inspection creates value when inspection is frequent, repeatable, and documentation-heavy. Manual QC remains essential when products change rapidly, defect definitions are immature, or process context matters as much as the image itself. Many successful factories use a 2-stage model: AI for first-pass screening and human review for exceptions, new defect types, and release decisions during the first 4–12 weeks of stabilization.

Where buyers often misread the comparison

A common procurement mistake is comparing headcount cost only. That ignores the cost of customer returns, quarantine hours, delayed shipments, and engineering time spent resolving quality claims. Another mistake is assuming that AI inspection automatically improves results without controlled optics, stable fixtures, and acceptable training data. In reality, camera resolution, lighting geometry, part presentation, and defect labeling discipline determine whether the system performs reliably.
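To make the headcount-only mistake concrete, the sketch below adds the cost items named above into one figure per inspection approach. The field names and every number are placeholders chosen for illustration, not benchmarks; real inputs would come from a plant's own records.

```python
from dataclasses import dataclass

@dataclass
class QcCostInputs:
    inspection_cost: float            # inspector wages or station cost for the period
    escaped_defects: int              # defective parts that reached the customer
    cost_per_escape: float            # returns and claims per escaped part
    quarantine_hours: float           # hours that lots spent on hold
    cost_per_quarantine_hour: float
    engineering_hours: float          # time spent resolving quality claims
    cost_per_engineering_hour: float
    delayed_shipment_cost: float      # lump sum for late deliveries

def total_quality_cost(c: QcCostInputs) -> float:
    """Sum inspection cost and the downstream costs that headcount ignores."""
    return (c.inspection_cost
            + c.escaped_defects * c.cost_per_escape
            + c.quarantine_hours * c.cost_per_quarantine_hour
            + c.engineering_hours * c.cost_per_engineering_hour
            + c.delayed_shipment_cost)

# Placeholder figures for one quarter (assumptions, not measured data).
manual = QcCostInputs(20000, 12, 1500, 40, 80, 30, 100, 2000)
ai_station = QcCostInputs(26000, 3, 1500, 10, 80, 8, 100, 500)

print(total_quality_cost(manual))      # 46200
print(total_quality_cost(ai_station))  # 32600
```

In this invented case the AI station costs more to run but less overall, which is the pattern a headcount-only comparison would hide. With different inputs the result can just as easily go the other way.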

G-AIT’s cross-sector benchmarking is valuable here because the same core decision logic appears across laser processing, 3D inspection, and advanced materials verification. The hardware may differ, but the deployment question remains consistent: can the target defect be captured repeatably, classified objectively, and linked to a business decision such as rework, scrap, release, or supplier feedback?

A practical threshold framework

Use AI inspection first when all 5 of the following conditions are present: stable fixturing, controlled lighting, at least one clear defect taxonomy, recurring inspections every shift or every lot, and a defined escalation path for uncertain results. If 2 or fewer of these are in place, manual QC or hybrid validation usually gives better short-term control.
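The threshold framework can be written as a small checklist function. This is a minimal sketch: the condition identifiers are invented names, and the outcome for 3 or 4 conditions is an assumption, since the framework only states the two ends of the range.

```python
CONDITIONS = (
    "stable_fixturing",
    "controlled_lighting",
    "clear_defect_taxonomy",
    "recurring_inspections",
    "defined_escalation_path",
)

def recommend_approach(present):
    """Map the set of conditions already in place to a starting approach."""
    present = set(present)
    unknown = present - set(CONDITIONS)
    if unknown:
        raise ValueError(f"unknown conditions: {sorted(unknown)}")
    met = len(present)
    if met == len(CONDITIONS):
        return "ai_first"
    if met <= 2:
        return "manual_or_hybrid"
    # 3 or 4 conditions: no rule is stated for this case, so flag it for review.
    return "review_case_by_case"

print(recommend_approach(CONDITIONS))
print(recommend_approach({"stable_fixturing", "controlled_lighting"}))
```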

Which applications benefit most from AI inspection across industries?

AI inspection is most effective in environments where the defect can be seen, measured, or inferred from repeatable image patterns. This includes 2D surface review, 3D geometry verification, thermal pattern analysis, and optical anomaly detection. The strongest candidates usually share 3 traits: repetitive part flow, costly defect escape, and a need to compare batches, suppliers, or machine settings over time.

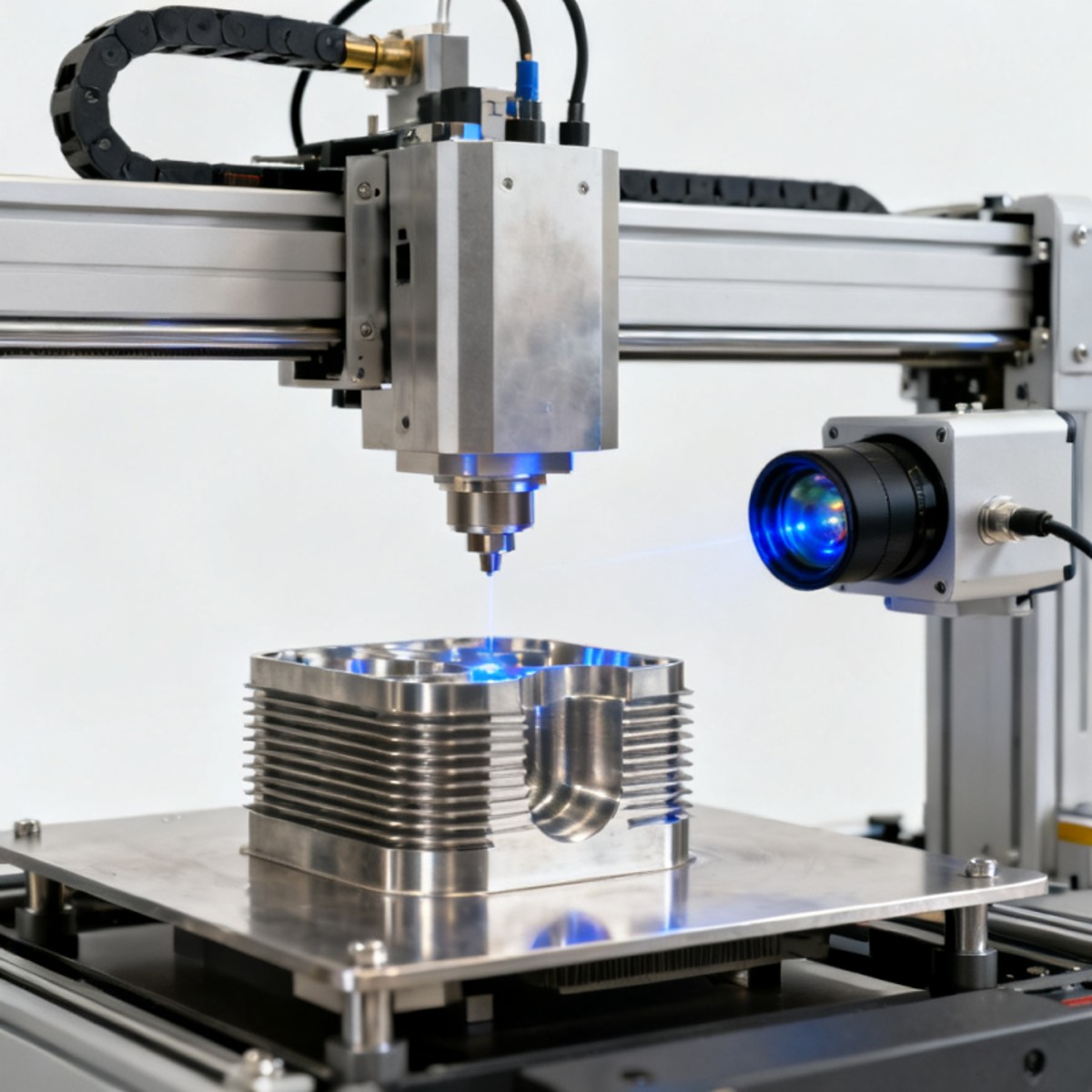

In additive manufacturing, AI inspection supports build verification at several points: powder bed observation, in-process monitoring, and post-build dimensional or surface checks. For metal 3D printers, inspection can help identify contour irregularities, unsupported features, residual powder retention, or dimensional deviation before machining or assembly. This is especially useful when each build contains multiple parts and rework windows are short.

In industrial laser processing, machine vision supports seam inspection, edge quality review, spatter pattern observation, and cut or weld consistency checks. Here, throughput pressure is often high, and defect characteristics can vary within narrow tolerances. A camera-based system linked to AI inspection can evaluate every part instead of relying on periodic sampling, which is valuable when process drift can occur within a single shift.

For vacuum, cryogenic, and advanced materials applications, not every quality issue is visible through standard optics. Yet AI inspection still helps in contamination screening, assembly verification, labeling, connector orientation, and documentation control. The lesson is important for project owners: AI is not limited to a single industry; it is best understood as a scalable inspection architecture with different sensor and rule combinations.

Application fit by inspection scenario

The next table helps technical buyers map application scenarios to the most realistic QC approach. It is particularly useful during feasibility review, capital approval, or distributor-level technical consultation.

This scenario-based view prevents overinvestment and under-specification at the same time. It also helps distributors and commercial teams frame realistic proposals. Instead of selling “AI inspection” as a generic concept, they can match the tool to throughput, defect type, and compliance burden.

Typical implementation path

- Define 3–5 defect classes and link each one to a production decision such as pass, rework, hold, or scrap.

- Collect image samples across at least 2–4 shifts, multiple operators, and normal process variation.

- Validate optics, lighting, and part positioning before model training, not after.

- Run parallel manual QC and AI inspection for an initial period, often 2–6 weeks depending on process stability.

- Set retraining rules, escalation thresholds, and audit retention before full release.

This phased method is one reason G-AIT is useful to enterprise buyers. The value is not simply system comparison; it is decision discipline across technical specification, compliance review, and supplier communication.
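The first and last steps of the path above, linking defect classes to production decisions and setting an escalation threshold, can be sketched together. The class names, the 0.80 confidence threshold, and the `decide` function are hypothetical; a real system would take these from the agreed defect taxonomy and the validation results.

```python
# Hypothetical defect classes mapped to the production decisions named above.
DISPOSITION = {
    "none": "pass",
    "surface_scratch": "rework",
    "dimensional_drift": "hold",
    "porosity": "scrap",
}

# Assumed value: results below this model confidence go to a person.
ESCALATION_THRESHOLD = 0.80

def decide(defect_class: str, confidence: float) -> str:
    """Return the production decision, or escalate when the result is uncertain."""
    if defect_class not in DISPOSITION or confidence < ESCALATION_THRESHOLD:
        return "human_review"
    return DISPOSITION[defect_class]

print(decide("porosity", 0.95))      # scrap
print(decide("porosity", 0.50))      # human_review
print(decide("unknown_mark", 0.99))  # human_review
```

Sending unknown classes to human review, rather than defaulting to pass, keeps new defect types visible during stabilization.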

What should procurement and engineering teams check before investing?

Before buying an AI inspection system, teams should verify whether the business case is driven by labor pressure, defect escape cost, audit traceability, or process scalability. These drivers lead to different specifications. A line with frequent product changeovers may need flexible recipes and fast retraining. A stable, high-volume line may prioritize cycle time and false reject control. A regulated supply chain may value data retention, user permissions, and revision history over raw speed.

For quality control personnel and safety managers, the most important technical checks often fall into 5 groups: sensor suitability, lighting stability, defect taxonomy, system integration, and validation protocol. If one of these groups is weak, the apparent precision of AI inspection can create false confidence. A camera that sees too little or too much variation is not a reliable quality tool, regardless of software claims.

For procurement and commercial evaluators, timelines matter. A basic feasibility study may take 1–3 weeks if sample images and defect definitions already exist. A full deployment with fixtures, software tuning, MES or PLC integration, and operator training often requires 4–12 weeks. Complex multi-part programs or cross-site standardization may take longer, particularly when customer approval or export-control review is involved.

G-AIT supports this phase by connecting technical benchmarks with sourcing realities. Buyers do not only need camera and algorithm data. They also need to assess standards alignment, vendor response capability, data ownership, and whether the proposed inspection path matches actual production constraints across global sites.

Procurement checklist for AI inspection projects

- Confirm the inspection target: cosmetic defect, dimensional deviation, assembly error, thermal anomaly, or mixed criteria.

- Ask for the acceptable false reject and false accept ranges during pilot and after stabilization.

- Review data handling: image retention period, access control, traceability format, and system export capability.

- Check integration scope: standalone station, inline cell, robot-linked station, or enterprise quality platform.

- Require a clear service model covering commissioning, operator training, retraining support, and change management.
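For the checklist item on false reject and false accept ranges, it helps to agree on how the two rates are calculated before asking for limits. The sketch below uses one common convention (false accepts divided by all truly defective parts, false rejects divided by all truly good parts). Other conventions exist, so the definition itself should be confirmed with the supplier. The counts and limits are invented.

```python
def pilot_error_rates(defective_total, defective_accepted, good_total, good_rejected):
    """Compute false accept and false reject rates from verified pilot counts."""
    if defective_total <= 0 or good_total <= 0:
        raise ValueError("both reference groups must contain at least one part")
    return {
        "false_accept_rate": defective_accepted / defective_total,
        "false_reject_rate": good_rejected / good_total,
    }

def within_limits(rates, max_false_accept, max_false_reject):
    """Check the measured rates against the limits agreed for this phase."""
    return (rates["false_accept_rate"] <= max_false_accept
            and rates["false_reject_rate"] <= max_false_reject)

# Invented pilot counts: 200 verified defective parts, 4800 verified good parts.
pilot = pilot_error_rates(200, 4, 4800, 30)
print(pilot)                             # false accept 0.02, false reject 0.00625
print(within_limits(pilot, 0.01, 0.01))  # False: false accept rate is above 1%
```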

Standards and compliance considerations

Not every AI inspection project needs the same standard set, but most B2B buyers should evaluate fit against relevant ISO quality frameworks, ASTM methods where materials or additive manufacturing are involved, and SEMI or IEEE references where electronics, interfaces, or data handling apply. The goal is not to claim universal certification. The goal is to ensure the inspection logic, documentation flow, and acceptance criteria can survive customer audits and internal governance review.

This is particularly important for large enterprises managing multiple plants, distributors supporting local installation, and project managers coordinating between engineering, operations, and purchasing. AI inspection succeeds faster when the acceptance plan is defined before the equipment arrives.

Common misconceptions, risk controls, and final selection advice

One misconception is that AI inspection eliminates the need for human expertise. In reality, it shifts expertise toward image strategy, defect labeling, exception handling, and process feedback. Another misconception is that more data automatically means better accuracy. If labels are inconsistent or optics are unstable, larger data volumes can amplify noise rather than improve model quality. Good inspection programs are built on controlled inputs, not only on software complexity.

A second risk is underestimating maintenance. Lens contamination, lighting aging, fixture wear, and product revision changes can all affect detection quality over 3–12 months. Teams should define routine verification intervals, such as weekly image checks, monthly threshold review, and quarterly model performance review where needed. This is especially important in harsh industrial environments with dust, vibration, reflection, or thermal variation.
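The verification intervals mentioned above can be tracked with a very small schedule check. In this sketch the task names are invented, and the month and quarter are approximated as 30 and 91 days, which is an assumption rather than a recommendation.

```python
from datetime import date, timedelta

# Assumed intervals: weekly, roughly monthly, roughly quarterly.
INTERVALS = {
    "image_check": timedelta(days=7),
    "threshold_review": timedelta(days=30),
    "model_performance_review": timedelta(days=91),
}

def due_tasks(last_done, today):
    """Return the verification tasks whose interval has elapsed."""
    return sorted(
        task for task, interval in INTERVALS.items()
        if today - last_done[task] >= interval
    )

# Hypothetical completion dates for one inspection station.
last_done = {
    "image_check": date(2024, 3, 1),
    "threshold_review": date(2024, 2, 20),
    "model_performance_review": date(2024, 1, 15),
}
print(due_tasks(last_done, date(2024, 3, 10)))  # ['image_check']
```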

A third issue is organizational. If operators, quality engineers, and IT teams are not aligned, the system may produce valid results that nobody trusts or uses correctly. Clear ownership is essential. Who approves retraining? Who signs off on recipe changes? Who investigates false rejects? These governance questions often matter as much as camera resolution or neural network type.

The best final advice is simple: choose AI inspection when the defect is definable, the process is repetitive, and traceable evidence matters to customers or regulators. Keep manual QC in the loop when products are changing, defect knowledge is immature, or rare anomalies carry high safety or reputation risk. In many factories, the strongest answer is not AI instead of manual QC, but AI inspection designed to make human review more focused, faster, and better documented.

FAQ for buyers, operators, and quality teams

How do I know if my process has enough data for AI inspection?

You do not need unlimited data, but you do need representative data. Start with samples from multiple lots, at least 2–4 shifts if possible, and both good and defective parts under normal production variation. If defects are extremely rare, a hybrid approach with anomaly detection plus human confirmation may be more realistic than a fully classified model in the first phase.

Is AI inspection only suitable for large factories?

No. Small and mid-scale operations can also benefit when the cost of a defect escape is high or when trained inspectors are difficult to retain across shifts. What changes is system scope. A single-station AI inspection cell may be enough for one critical step, while large enterprises may deploy multi-line, multi-site systems with centralized analytics.

What is the safest way to transition from manual QC?

Use a staged rollout. Keep manual QC and AI inspection in parallel for 2–6 weeks, compare outcomes, refine thresholds, and document disagreements. This protects shipment quality while building trust in the system. It also gives engineering teams time to improve lighting, fixturing, and defect labels before full reliance on automation.
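Documenting disagreements during the parallel period can be as simple as comparing the two result sets by part ID. The following is a minimal sketch with invented part IDs and results; in practice the records would come from the manual QC log and the inspection system's export.

```python
def compare_parallel_run(manual, ai):
    """Compare manual and AI results for parts inspected by both.

    Returns the agreement rate and a list of (part_id, manual, ai) disagreements.
    """
    shared = sorted(manual.keys() & ai.keys())
    if not shared:
        raise ValueError("no parts were inspected by both methods")
    disagreements = [
        (part_id, manual[part_id], ai[part_id])
        for part_id in shared
        if manual[part_id] != ai[part_id]
    ]
    agreement = 1 - len(disagreements) / len(shared)
    return agreement, disagreements

# Invented results for five parts; P-005 was only inspected manually.
manual_results = {"P-001": "pass", "P-002": "fail", "P-003": "pass", "P-004": "pass", "P-005": "fail"}
ai_results = {"P-001": "pass", "P-002": "fail", "P-003": "fail", "P-004": "pass"}

agreement, disagreements = compare_parallel_run(manual_results, ai_results)
print(agreement)      # 0.75
print(disagreements)  # [('P-003', 'pass', 'fail')]
```

Each listed disagreement is a candidate for engineering review: it may point to a labeling problem, a lighting or fixturing issue, or a defect the manual check missed.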

What should I ask a technical partner before requesting a quotation?

Ask about sensor type, image stability requirements, expected implementation stages, retraining support, integration with existing PLC or MES systems, data retention options, and acceptance criteria. If the application involves additive manufacturing, industrial lasers, advanced materials, or vacuum systems, also ask how the inspection logic will reflect the process-specific technical specifications and relevant standards.

Why work with G-AIT for AI inspection benchmarking and sourcing decisions?

G-AIT brings a practical advantage to companies evaluating AI inspection across complex industrial environments. Instead of viewing machine vision as an isolated tool, G-AIT benchmarks it alongside Industrial Laser Processing, 3D Printing and Additive Manufacturing, high-performance materials, and vacuum or cryogenic engineering requirements. That matters because real purchasing decisions rarely happen in a single technical silo.

For information researchers and business evaluators, G-AIT helps convert broad market claims into verifiable engineering criteria. For operators and quality teams, it supports decision-making on defect categories, inspection architecture, and implementation priorities. For procurement directors and enterprise leaders, it provides a more reliable path for comparing technical specifications, standards fit, project timelines, and commercial risk across multiple suppliers or technology routes.

You can consult G-AIT for parameter confirmation, AI inspection solution selection, feasibility review for 3D inspection or machine vision projects, expected delivery and implementation windows, standards and audit considerations, sample evaluation planning, and quotation alignment with real production needs. This is especially useful when your project spans several plants, multiple product families, or high-consequence quality requirements.

If you are deciding whether AI inspection should replace, support, or coexist with manual QC, the most effective next step is a structured review of your inspection targets, throughput range, traceability requirements, and acceptance criteria. With that foundation, G-AIT can help you narrow the right technical path, reduce sourcing uncertainty, and move from concept discussion to a defensible procurement and implementation plan.

- supply chain

- manufacturing

- precision manufacturing

- LED lighting

- cement

- distributors

- electronics

- Industrial Laser Processing

- 3D Printing

- Additive Manufacturing

- Machine Vision

- Optical Inspection

- Vacuum Engineering

- Cryogenic Engineering

- Fiber Lasers

- Metal 3D Printers

- Technical Specifications

- Industrial Standards

Dr. Victor Gear
