Where laser cutting precision (mm) benchmarks often mislead

Many technical evaluators rely on laser cutting precision (mm) benchmarks as a quick filter, yet these figures often conceal more than they reveal.

Tolerance claims can shift with material type, thickness, edge quality criteria, thermal distortion, and inspection method.

This article explains where laser cutting precision (mm) benchmarks become misleading and how to judge real cutting performance with verifiable, application-specific evidence.

What do laser cutting precision (mm) benchmarks actually measure?

The first problem is definition.

Many brochures present laser cutting precision (mm) benchmarks as a single, definitive machine figure, but precision can mean several different things.

It may describe positioning accuracy, repeatability, kerf deviation, hole diameter error, contour accuracy, or part-to-part consistency.

Those values are related, but they are not interchangeable.

A system can show excellent axis repeatability while delivering weaker edge accuracy on reflective metals or thicker sheets.

That difference matters in general industry, where one cutting platform may process stainless steel, carbon steel, aluminum, copper alloys, plastics, and composite laminates.

Another issue is test geometry.

Straight-line cuts often look tighter than sharp corners, micro-holes, nested profiles, or heat-sensitive patterns.

If a benchmark comes from simple shapes, it may overstate real production accuracy.

The safest reading is this: laser cutting precision (mm) benchmarks are indicators, not guarantees.

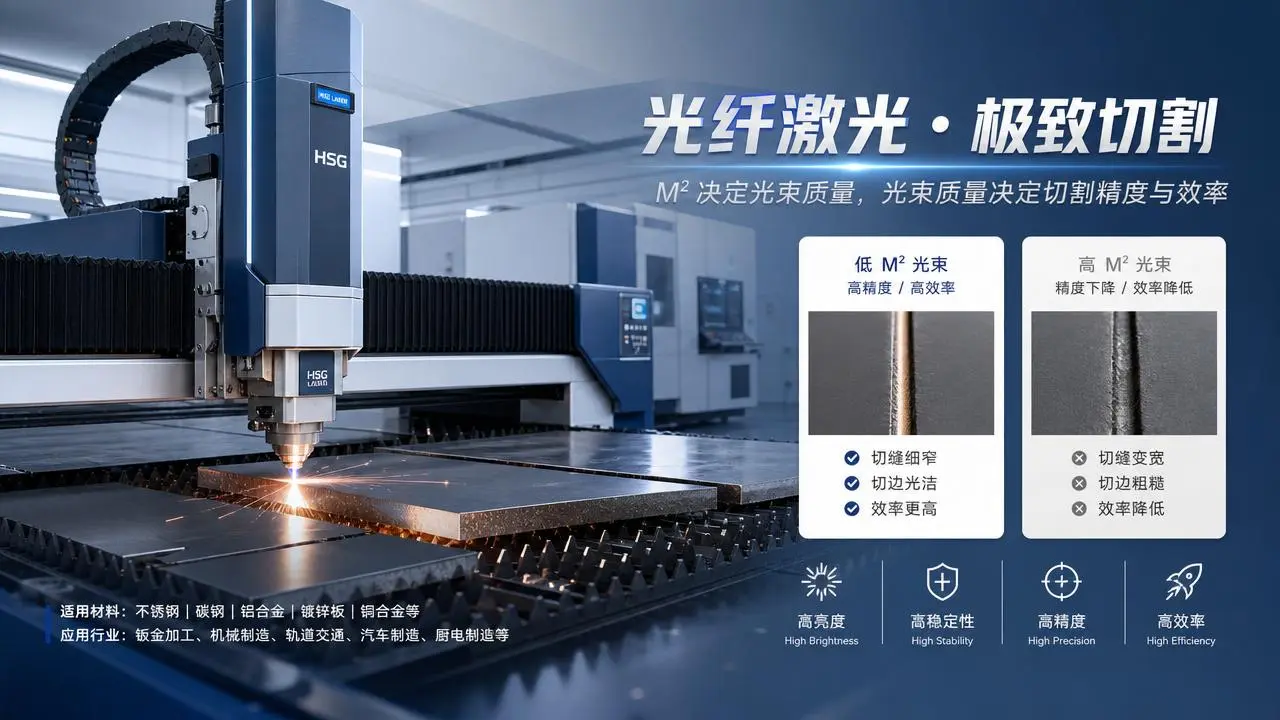

Why can published benchmark values be misleading across materials and thicknesses?

Material behavior changes the answer more than many data sheets admit.

Thin stainless steel can support very narrow tolerance bands.

Thicker carbon steel often behaves differently because melt ejection, heat input, and assist gas flow change cut dynamics.

Aluminum introduces another layer of complexity through reflectivity, thermal conductivity, and edge response.

A benchmark achieved on 1 mm steel does not automatically transfer to 8 mm aluminum.

Even within one material family, surface condition affects results.

Coatings, scale, oxide layers, film coverings, and flatness variation can alter focal stability and edge finish.

That means laser cutting precision (mm) benchmarks without material details are incomplete.

Thickness skews the picture further.

As section thickness rises, kerf shape can taper.

Top-edge and bottom-edge dimensions may no longer match closely.

A vendor may cite one favorable dimension while the application requires another.
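To make the top-edge versus bottom-edge gap concrete, here is a minimal sketch that estimates the taper of each kerf wall from the two kerf widths and the sheet thickness. It assumes a symmetric, straight-walled kerf, which real cuts only approximate; the function name and the sample numbers are hypothetical and used for illustration only.

```python
import math


def kerf_taper_deg(top_width_mm: float, bottom_width_mm: float, thickness_mm: float) -> float:
    """Estimate the taper angle of one kerf wall, in degrees.

    Assumes a symmetric kerf with straight walls, so each wall carries
    half of the difference between top and bottom kerf width.
    """
    if thickness_mm <= 0:
        raise ValueError("thickness_mm must be positive")
    half_difference_mm = (top_width_mm - bottom_width_mm) / 2.0
    return math.degrees(math.atan(half_difference_mm / thickness_mm))


# Hypothetical values, not taken from any data sheet.
taper = kerf_taper_deg(top_width_mm=0.30, bottom_width_mm=0.20, thickness_mm=8.0)
print(f"Estimated taper per wall: {taper:.2f} deg")
```

In this hypothetical case a slot measured at the top edge would be 0.10 mm wider than the same slot measured at the bottom edge, which is why the quoted dimension must match the edge that matters for the application.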

For this reason, benchmark claims should always name:

- material grade

- sheet or tube thickness

- assist gas type and pressure

- laser power and focal setup

- part geometry and inspection method

Without those conditions, laser cutting precision (mm) benchmarks can create false confidence.
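The conditions listed above can be turned into a simple completeness check on a vendor claim. The sketch below is one possible way to do it; the `BenchmarkClaim` structure, its field names, and the units are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class BenchmarkClaim:
    """A published precision claim plus the conditions it should name.

    Field names and units are hypothetical, chosen to mirror the list above.
    """
    tolerance_mm: float
    material_grade: Optional[str] = None
    thickness_mm: Optional[float] = None
    assist_gas: Optional[str] = None
    gas_pressure_bar: Optional[float] = None
    laser_power_kw: Optional[float] = None
    focal_setup: Optional[str] = None
    part_geometry: Optional[str] = None
    inspection_method: Optional[str] = None


def missing_conditions(claim: BenchmarkClaim) -> List[str]:
    """Return the names of all test conditions the claim leaves unstated."""
    return [f.name for f in fields(claim) if getattr(claim, f.name) is None]


# Hypothetical brochure claim that names only material and thickness.
claim = BenchmarkClaim(tolerance_mm=0.05, material_grade="304 stainless", thickness_mm=1.0)
print("Unstated conditions:", missing_conditions(claim))
```

A claim that returns a long list here is not necessarily wrong, but it cannot yet be compared with another vendor's number.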

How do inspection methods and quality criteria change the benchmark result?

Measurement itself is a hidden variable.

Different teams may inspect the same part and report different numbers.

A caliper, optical comparator, CMM, vision system, or laser scanner will not always produce identical findings.

The reference edge also matters.

Some methods measure top-edge contour.

Others average the cut wall or inspect the functional fit after secondary handling.

If burr height, dross, taper, heat-affected zone, or edge perpendicularity are excluded, a precision claim can look stronger than the delivered part quality.

This is why laser cutting precision (mm) benchmarks should be paired with edge-quality rules.
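One way to pair a dimensional claim with edge-quality rules is to accept a part only when every criterion passes, and to report which ones failed. The sketch below assumes three criteria (dimensional error, burr height, taper) and invented limit values; actual limits would come from the drawing or the applicable quality specification.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EdgeLimits:
    """Acceptance limits; the values used below are hypothetical."""
    max_dim_error_mm: float
    max_burr_mm: float
    max_taper_deg: float


@dataclass
class PartMeasurement:
    dim_error_mm: float  # signed deviation from nominal
    burr_mm: float       # burr height
    taper_deg: float     # taper of the cut wall


def failed_criteria(part: PartMeasurement, limits: EdgeLimits) -> List[str]:
    """List every criterion the part fails; an empty list means accepted."""
    failures = []
    if abs(part.dim_error_mm) > limits.max_dim_error_mm:
        failures.append("dimension")
    if part.burr_mm > limits.max_burr_mm:
        failures.append("burr")
    if abs(part.taper_deg) > limits.max_taper_deg:
        failures.append("taper")
    return failures


limits = EdgeLimits(max_dim_error_mm=0.05, max_burr_mm=0.02, max_taper_deg=0.5)
part = PartMeasurement(dim_error_mm=0.03, burr_mm=0.05, taper_deg=0.3)
print("Failed criteria:", failed_criteria(part, limits))
```

The sample part meets the dimensional limit yet is still rejected on burr height, which is the situation a dimension-only benchmark hides.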

In regulated or high-tech sectors, inspection traceability is essential.

Benchmark data should state whether testing followed ISO-aligned dimensional methods, internal protocols, or sample-based demonstration only.

A small benchmark number has little value if the test cannot be repeated under documented conditions.

When do process stability and production reality matter more than a headline tolerance?

A single successful sample is not the same as stable production.

In actual operations, accuracy changes with nozzle wear, lens contamination, gas purity, nesting density, sheet flatness, vibration, and thermal accumulation.

Those factors often decide whether laser cutting precision (mm) benchmarks hold through an entire shift.

Cycle strategy matters too.

A machine may hit a tight benchmark only at reduced speed, with special cut sequencing, or with unusually high gas consumption.

That may not be economical for routine production.

High accuracy should be evaluated together with throughput, scrap rate, maintenance intervals, and first-pass yield.

In broad industrial settings, the better question is not, “What is the smallest published tolerance?”

The better question is, “What tolerance remains stable at target speed, on target material, across target volume?”

That approach turns laser cutting precision (mm) benchmarks into something decision-useful.
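A common way to express "stable tolerance" numerically is a process capability index such as Cpk, computed from parts cut at target speed on the target material. This index is not something the benchmarks discussed here necessarily report; it is brought in as an illustration, and it assumes roughly normally distributed measurements and a symmetric tolerance band. The sample data are invented.

```python
import math
import statistics
from typing import Sequence


def cpk(measurements_mm: Sequence[float], nominal_mm: float, tol_mm: float) -> float:
    """Process capability index for a symmetric tolerance of +/- tol_mm.

    Uses the sample standard deviation, so at least two parts are needed.
    """
    if len(measurements_mm) < 2:
        raise ValueError("need at least two measurements")
    mean = statistics.mean(measurements_mm)
    sigma = statistics.stdev(measurements_mm)
    if sigma == 0:
        return math.inf  # no observed variation in this sample
    upper_limit = nominal_mm + tol_mm
    lower_limit = nominal_mm - tol_mm
    return min(upper_limit - mean, mean - lower_limit) / (3 * sigma)


# Hypothetical measurements of a 10 mm feature from one production run.
run = [10.00, 10.02, 9.98, 10.01, 9.99]
print(f"Cpk at +/-0.05 mm: {cpk(run, nominal_mm=10.0, tol_mm=0.05):.2f}")
```

Many quality systems look for values around 1.33 or higher, but the right threshold depends on the application, and five parts are far too few for a real study.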

How should cutting performance be compared more reliably?

Reliable comparison starts with application matching.

Benchmark data should mirror the real mix of parts, materials, and quality thresholds expected in service.

A useful evaluation package includes several test categories.

- dimensional accuracy on representative geometries

- repeatability over multiple parts and shifts

- edge quality, burr level, and taper consistency

- productivity at acceptable quality

- maintenance sensitivity and consumable stability

It is also smart to request benchmark data as ranges, not one best-case value.

Ranges reveal variability.

Variability usually predicts implementation risk better than a perfect sample part.
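As a rough illustration of what a range-based report could contain, the sketch below summarizes a set of measured deviations instead of quoting only the best part. The function name and the sample values are hypothetical.

```python
import statistics
from typing import Dict, Sequence


def summarize_deviations(deviations_mm: Sequence[float]) -> Dict[str, float]:
    """Summarize absolute deviations from nominal across several parts."""
    if len(deviations_mm) < 2:
        raise ValueError("need at least two parts to describe variability")
    lowest = min(deviations_mm)
    highest = max(deviations_mm)
    return {
        "parts": len(deviations_mm),
        "best_mm": lowest,
        "worst_mm": highest,
        "range_mm": highest - lowest,
        "mean_mm": statistics.mean(deviations_mm),
        "stdev_mm": statistics.stdev(deviations_mm),
    }


# Hypothetical deviations from five parts; a brochure might quote only 0.02.
sample = [0.02, 0.04, 0.03, 0.07, 0.05]
for name, value in summarize_deviations(sample).items():
    print(f"{name}: {value:.3f}")
```

Here the best part and the worst part differ by more than a factor of three, which a single best-case figure would not show.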

For complex supply chains, third-party validation can improve trust.

Independent technical repositories and benchmarking institutions help normalize test conditions and expose weak comparisons.

That is especially important when laser cutting precision (mm) benchmarks influence qualification, export documentation, or multi-site process transfer.

Quick comparison table for benchmark interpretation

What questions should be asked before trusting laser cutting precision (mm) benchmarks?

A disciplined checklist prevents costly interpretation errors.

Before accepting laser cutting precision (mm) benchmarks, ask these practical questions:

- Which material grade and thickness produced this value?

- Was the part geometry simple, mixed, or highly detailed?

- Is the number based on one part or a repeatability study?

- What speed and assist gas settings were used?

- How were taper, burr, and edge condition judged?

- Which metrology tool verified the result?

- Can the same result be maintained over a production run?

These questions shift attention from marketing precision to functional precision.

That distinction is vital wherever cut parts move into welding, sealing, optical alignment, electronics packaging, or high-value assemblies.

FAQ summary

Laser cutting precision (mm) benchmarks remain useful, but only when read in context.

A benchmark without material detail, geometry detail, and inspection detail is only a partial signal.

A stronger evaluation framework combines dimensional accuracy, edge quality, process stability, and documented test repeatability.

For technically demanding industrial decisions, benchmark evidence should be verified against standards, real production conditions, and independent engineering review.

Use laser cutting precision (mm) benchmarks as a starting point, then validate them through application-matched trials and traceable data before moving forward.

Related News

Related News

- 00

0000-00

What to verify before buying laser rust removal machine wholesale - 00

0000-00

Laser welding penetration depth data that changes process setup - 00

0000-00

Automated laser workstation OEM options with fewer delays - 00

0000-00

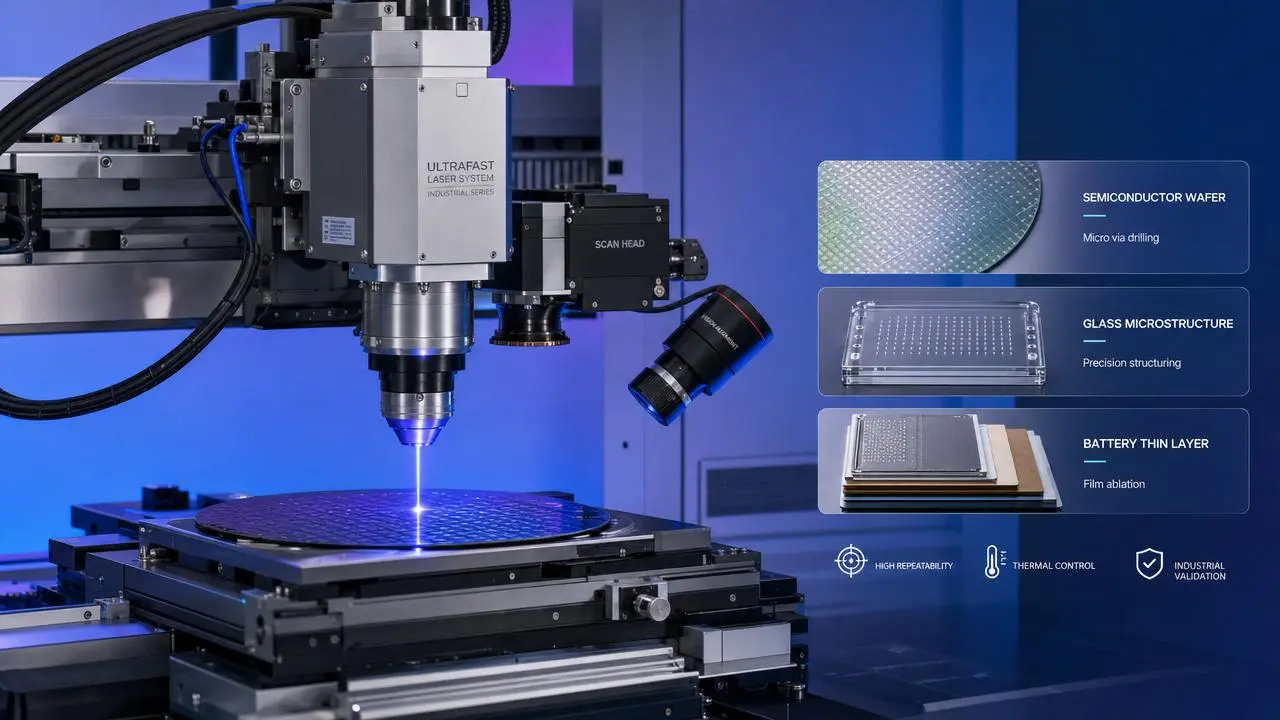

Ultrafast laser pulse duration benchmarks by real use case - 00

0000-00

Why R&D Institutes are revisiting ultrafast laser limits

Dr. Hideo Tanaka

Weekly Insights

Stay ahead with our curated technology reports delivered every Monday.