Ultrafast laser pulse duration benchmarks by real use case

For project managers and engineering leads evaluating speed, quality, and process stability, ultrafast laser pulse duration benchmarks are more than lab metrics: they are decision tools tied to real production outcomes. This article maps pulse duration performance to actual use cases, helping teams compare precision, heat impact, material response, and throughput with greater confidence before selecting systems for industrial deployment.

The practical purpose of ultrafast laser pulse duration benchmarks is comparison. Readers are not looking for theory alone. They want to know which pulse duration range performs better for cutting, drilling, marking, structuring, or processing sensitive materials.

For this audience, the most important concerns are predictable quality, thermal damage risk, throughput, cost justification, integration complexity, and whether a benchmark from a lab can translate into stable industrial output. They also need a way to compare vendors without relying on marketing claims.

The most useful approach is therefore use-case-based benchmarking. That means linking femtosecond, picosecond, and adjacent ultrafast regimes to edge quality, recast layer, microcracking, debris, feature size, absorption behavior, and achievable cycle time across real materials.

This article focuses on those decision factors first. It gives less space to generic laser history and more space to benchmark logic, selection criteria, trade-offs, and scenario-based recommendations relevant to industrial deployment planning.

Why pulse duration benchmarks matter more than headline power ratings

In procurement reviews, laser power is often the first visible specification. Yet in ultrafast processing, pulse duration frequently has greater influence on process quality, thermal load, and final feature integrity than raw average power alone.

Shorter pulses reduce the time available for heat to diffuse into the surrounding material. In practical terms, this means a smaller heat-affected zone, cleaner ablation, lower burr formation, and better dimensional control for many precision tasks.
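The effect of pulse duration on heat spread can be made concrete with the classical thermal diffusion length, commonly estimated as sqrt(D × τ), where D is the thermal diffusivity and τ is the pulse duration. The following is a minimal sketch, not a process model: the diffusivity values are approximate textbook-order figures chosen for illustration, and the function and dictionary names are hypothetical.

```python
import math

# Approximate room-temperature thermal diffusivities in m^2/s.
# ASSUMPTION: illustrative order-of-magnitude values, not measured data
# for any specific alloy or glass grade.
DIFFUSIVITY_M2_PER_S = {
    "copper": 1.1e-4,
    "stainless_steel": 4.0e-6,
    "fused_silica": 8.0e-7,
}


def thermal_diffusion_length_nm(diffusivity_m2_per_s: float,
                                pulse_duration_s: float) -> float:
    """Return the classical estimate sqrt(D * tau), in nanometres."""
    if diffusivity_m2_per_s <= 0 or pulse_duration_s <= 0:
        raise ValueError("diffusivity and pulse duration must be positive")
    return math.sqrt(diffusivity_m2_per_s * pulse_duration_s) * 1e9


if __name__ == "__main__":
    # Hypothetical pulse durations spanning femtosecond to nanosecond regimes.
    pulses = {"300 fs": 300e-15, "10 ps": 10e-12, "10 ns": 10e-9}
    for material, d in DIFFUSIVITY_M2_PER_S.items():
        for label, tau in pulses.items():
            length = thermal_diffusion_length_nm(d, tau)
            print(f"{material:16s} {label:>7s}: ~{length:.1f} nm")
```

Because the length scales with the square root of τ, a pulse 100 times shorter gives a diffusion length only 10 times smaller. The estimate also ignores electron-lattice coupling times and heat accumulation between pulses at high repetition rates, so it should be read as an order-of-magnitude guide rather than a predicted heat-affected zone.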

That does not mean the shortest pulse always wins. Project managers must judge pulse duration against target throughput, material class, required tolerance, and acceptable scrap rate. The best benchmark is application-specific, not absolute.

When buyers compare ultrafast laser pulse duration benchmarks correctly, they move from a specification mindset to an outcome mindset. The question becomes less about “what is the shortest pulse” and more about “what pulse range delivers the best production result.”

A practical benchmark framework: femtosecond vs picosecond by use case

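For real purchasing and process planning, pulse duration benchmarks are most useful when grouped into broad operational bands. In most industrial discussions, teams compare femtosecond systems (pulses shorter than about 1 ps, typically a few hundred femtoseconds in industrial sources) with picosecond systems (typically around 1 ps to a few tens of picoseconds) for precision manufacturing tasks.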

Femtosecond lasers generally offer the highest precision and the lowest thermal influence. They are often preferred when processing brittle, transparent, layered, or highly heat-sensitive materials where microcracks or delamination are unacceptable.

Picosecond lasers remain ultrafast and still deliver excellent quality. In many cases, they provide a more economical balance between precision and throughput, especially where production volume and equipment robustness matter as much as ultimate feature refinement.

From a benchmarking standpoint, femtosecond systems often lead in edge cleanliness, microfeature fidelity, and reduced collateral damage. Picosecond systems often compete strongly on process window width, cost effectiveness, maintenance practicality, and line integration.

That broad pattern helps, but it is still incomplete. Procurement decisions improve significantly when those pulse duration classes are tied to specific manufacturing outcomes rather than discussed as abstract laser categories.

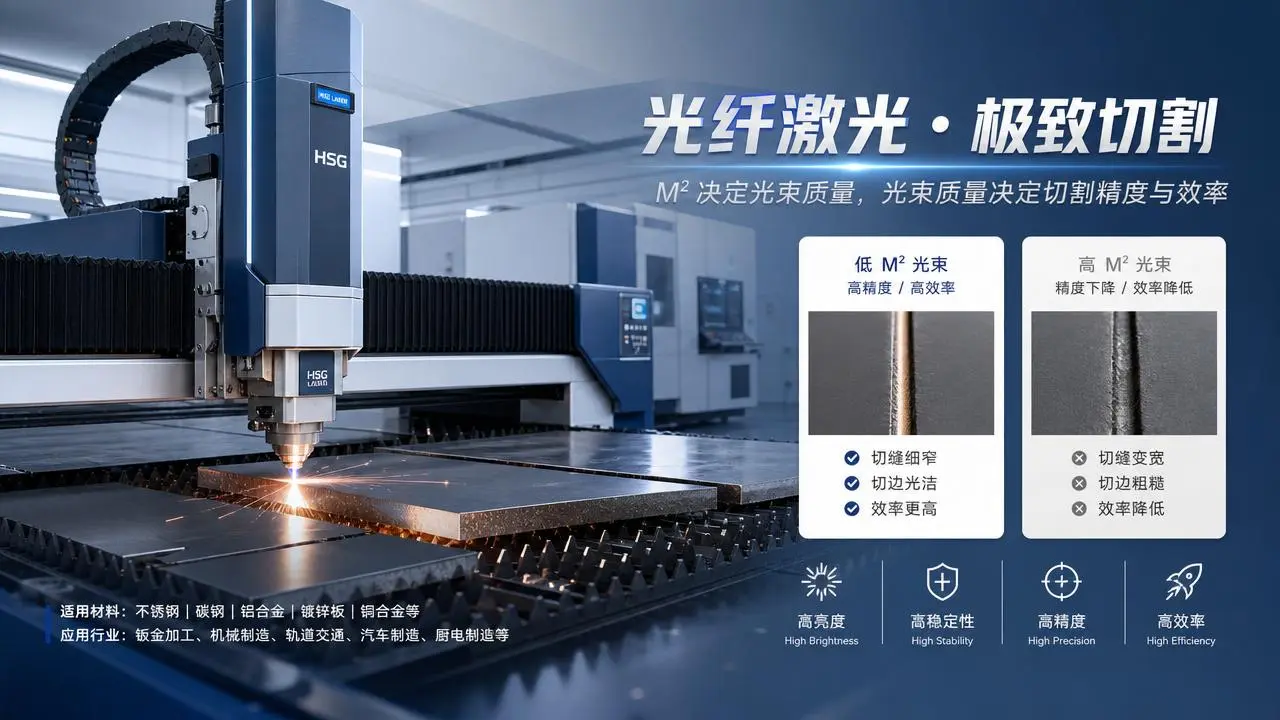

Benchmarks for micromachining metals: where quality starts to outweigh speed

In stainless steel, nickel alloys, copper, aluminum, and specialty metals, ultrafast pulse duration affects taper, burr formation, recast, and oxidation. These quality metrics often determine whether downstream polishing or cleaning is required.

For high-value microdrilling and thin-foil cutting, femtosecond pulses usually produce superior edge definition and lower recast. This is especially important in medical devices, fuel injector components, precision screens, and fine electrical interconnect structures.

However, picosecond systems may be preferred in production environments where slightly higher thermal impact is acceptable but capacity utilization, tool uptime, and capital efficiency carry more weight in the project business case.

Engineering teams should benchmark not only cut quality but also process consistency over long runs. A system that produces marginally cleaner features in short tests may underperform commercially if alignment sensitivity or maintenance frequency reduces actual throughput.

For metal micromachining, the most useful benchmark set includes feature accuracy, recast thickness, burr height, hole roundness, taper angle, scrap rate, and effective parts per hour. Pulse duration must be judged against all of them together.

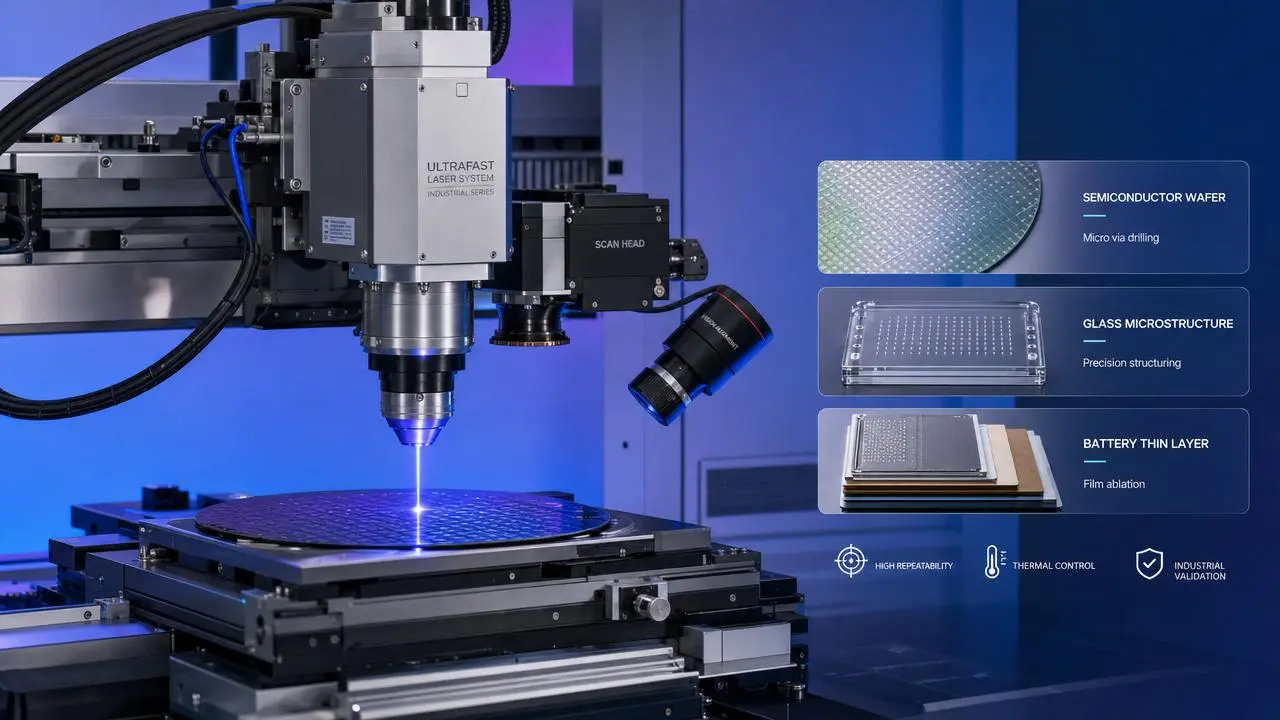

Benchmarks for glass and transparent materials: pulse duration becomes a risk control tool

Glass, sapphire, fused silica, and other transparent substrates create a different benchmarking challenge. Here, pulse duration directly influences crack initiation, chipping, edge strength, and whether internal modification can be performed cleanly.

Femtosecond lasers are often the benchmark leader for transparent materials because they support highly localized energy deposition with minimal surrounding damage. That makes them attractive for display glass, microfluidics, photonics, and semiconductor packaging workflows.

For brittle materials, the cost of poor pulse duration selection is not only cosmetic. It can create latent defects that survive inspection but later fail in assembly or field use. Project managers should therefore treat pulse duration as a reliability factor.

Benchmark trials in glass should include sidewall roughness, crack density, chipping at entry and exit, edge strength after processing, and yield after subsequent bonding or coating steps. This is where real use-case testing is essential.

If a vendor presents only ablation rate or cut speed data, the benchmark is incomplete. In transparent material applications, hidden damage and post-process breakage may matter more than initial processing speed.

Benchmarks for polymers, composites, and flexible electronics

In polymers and multilayer films, excessive thermal load can cause melting, carbonization, delamination, or poor edge sealing. Pulse duration benchmarks help determine whether the process will be production-safe for sensitive web or sheet materials.

Femtosecond systems usually deliver the strongest results when edges must remain clean without melt residue, particularly in medical films, battery separators, microfilters, and flexible circuitry. Their advantage is most visible in heat-sensitive stack-ups.

Picosecond systems can still perform very well, especially when the material is less thermally fragile or when throughput priorities justify a broader compromise. The key is to test the actual laminate or polymer grade, not a generic substitute.

For composites, pulse duration also influences matrix damage and fiber exposure quality. Carbon fiber reinforced polymers and specialty laminates require benchmark data that includes mechanical integrity after processing, not just visual edge appearance.

Project leaders should ask for process benchmarks under real tension, fixturing, and contamination conditions. Lab-perfect polymer cuts do not guarantee stable output in roll-to-roll, high-mix, or contamination-prone production settings.

Benchmarks for semiconductor, electronics, and precision structuring tasks

Wafer dicing, thin-film patterning, via drilling, resistor trimming, and fine surface structuring all place extreme importance on pulse duration. In these environments, a small thermal difference can affect electrical behavior and yield.

Femtosecond pulse benchmarks often stand out where substrate damage, debris control, and micron-level fidelity are critical. They are especially valuable when devices have tightly packed architectures or multiple fragile material layers.

Picosecond systems remain highly relevant in electronics manufacturing because they can offer a strong combination of speed and acceptable quality for many patterning, marking, and selective removal operations where process margins are wider.

For benchmarking in electronics, useful metrics include kerf width, debris redeposition, dielectric damage, conductive layer continuity, electrical test pass rate, and post-process cleaning burden. These indicators show whether laser quality supports manufacturing economics.

This is one of the clearest examples of why ultrafast laser pulse duration benchmarks must be tied to the final product function. A visually neat feature is not enough if it lowers electrical reliability.

How to compare throughput honestly without falling for misleading benchmark data

One of the most common buying mistakes is treating pulse duration as if it can be benchmarked independently from repetition rate, pulse energy, beam quality, wavelength, scanning architecture, and software strategy. It cannot.
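A short calculation shows why these parameters cannot be separated. The sketch below uses standard relations (pulse energy = average power / repetition rate, peak power ≈ pulse energy / pulse duration, and Gaussian peak fluence = 2E / (π w0²)); the function names and the example laser settings are hypothetical and chosen only for illustration.

```python
import math


def pulse_energy_j(average_power_w: float, repetition_rate_hz: float) -> float:
    """Energy per pulse in joules."""
    return average_power_w / repetition_rate_hz


def peak_power_w(energy_j: float, pulse_duration_s: float) -> float:
    """Rough peak power; ignores the pulse-shape factor (about 0.94 for Gaussian)."""
    return energy_j / pulse_duration_s


def peak_fluence_j_per_cm2(energy_j: float, spot_radius_um: float) -> float:
    """Peak fluence of a Gaussian beam with 1/e^2 radius w0: 2E / (pi * w0^2)."""
    w0_cm = spot_radius_um * 1e-4  # micrometres to centimetres
    return 2.0 * energy_j / (math.pi * w0_cm ** 2)


if __name__ == "__main__":
    # ASSUMPTION: hypothetical settings, identical except for pulse duration.
    average_power_w = 20.0
    repetition_rate_hz = 1e6
    spot_radius_um = 10.0

    energy = pulse_energy_j(average_power_w, repetition_rate_hz)
    fluence = peak_fluence_j_per_cm2(energy, spot_radius_um)
    for label, tau in {"300 fs": 300e-15, "10 ps": 10e-12}.items():
        print(f"{label}: energy {energy * 1e6:.1f} uJ, "
              f"fluence {fluence:.2f} J/cm^2, "
              f"peak power {peak_power_w(energy, tau) / 1e6:.1f} MW")
```

With average power, repetition rate, and spot size held fixed, the two systems deliver the same pulse energy and fluence but peak powers that differ by more than an order of magnitude. A benchmark that quotes pulse duration without the other settings therefore leaves the actual process regime undefined.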

A vendor may show impressive throughput at a given pulse duration, but the result may depend on a specific optics stack, a narrow material thickness, or a quality threshold below your production requirement. Benchmark context always matters.

Project managers should ask whether stated throughput refers to gross scan speed, net completed parts, or accepted yield after inspection. These numbers can differ substantially, especially in delicate applications where quality losses erase theoretical speed gains.

A strong benchmark comparison uses a common material, common geometry, common acceptance criteria, and common measurement methods. Without those controls, pulse duration data can be technically correct but commercially misleading.
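One way to enforce those controls is to record the test conditions for each vendor trial and flag any field that differs before comparing results. This is a minimal sketch with a hypothetical `BenchmarkConditions` record; the thickness field is an added assumption, and the example values are invented.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class BenchmarkConditions:
    """Conditions that should match before two benchmark results are compared."""
    material: str
    thickness_um: float          # ASSUMPTION: added here; not in the list above
    geometry: str
    acceptance_criteria: str
    measurement_method: str


def mismatched_conditions(a: BenchmarkConditions,
                          b: BenchmarkConditions) -> list[str]:
    """Return the names of the fields on which the two trials differ."""
    return [f.name for f in fields(a)
            if getattr(a, f.name) != getattr(b, f.name)]


if __name__ == "__main__":
    # Hypothetical trials from two vendors.
    vendor_a = BenchmarkConditions("316L stainless", 100.0, "50 um hole array",
                                   "burr < 2 um", "optical profilometer")
    vendor_b = BenchmarkConditions("316L stainless", 100.0, "50 um hole array",
                                   "burr < 2 um", "SEM cross-section")
    gaps = mismatched_conditions(vendor_a, vendor_b)
    print("Comparable" if not gaps else f"Not directly comparable: {gaps}")
```

An empty result does not prove the benchmarks are equivalent, but a non-empty one is a clear signal to request a rerun under shared conditions before drawing conclusions about pulse duration.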

In many industrial deployments, the winning system is not the one with the highest instantaneous ablation rate. It is the one with the best stable throughput after accounting for rejects, cleaning, post-processing, and downtime.
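The gap between headline speed and stable throughput can be estimated with simple arithmetic. The sketch below is one possible simplification, assuming that availability and first-pass yield multiply and that a downstream cleaning or finishing step may cap the line rate; the function name and all numbers are hypothetical.

```python
from typing import Optional


def effective_parts_per_hour(gross_parts_per_hour: float,
                             availability: float,
                             first_pass_yield: float,
                             downstream_capacity_per_hour: Optional[float] = None
                             ) -> float:
    """Accepted parts per hour after downtime, downstream limits, and rejects."""
    for name, value in (("availability", availability),
                        ("first_pass_yield", first_pass_yield)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    # Parts actually completed once downtime is taken into account.
    completed = gross_parts_per_hour * availability
    # A slower cleaning or finishing step limits the whole line.
    if downstream_capacity_per_hour is not None:
        completed = min(completed, downstream_capacity_per_hour)
    # Only parts that pass inspection count.
    return completed * first_pass_yield


if __name__ == "__main__":
    # ASSUMPTION: invented figures for two hypothetical systems.
    fast_system = effective_parts_per_hour(1200, availability=0.85,
                                           first_pass_yield=0.80)
    stable_system = effective_parts_per_hour(900, availability=0.95,
                                             first_pass_yield=0.98)
    print(f"Fast system:   {fast_system:.1f} accepted parts/h")
    print(f"Stable system: {stable_system:.1f} accepted parts/h")
```

In this invented example the system with the lower gross rate delivers more accepted parts per hour, which is the pattern the paragraph above describes.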

What project managers should ask vendors during benchmark reviews

To make ultrafast laser pulse duration benchmarks actionable, buyers need structured questions. Start by asking which pulse duration range the vendor recommends for your exact material, thickness, geometry, and required inspection threshold.

Next, ask for benchmark evidence under production-like conditions. That includes fixture method, environmental controls, number of parts tested, process drift over time, and whether results were produced by application engineers or under customer witness conditions.

Ask vendors to separate best-case and guaranteed-case performance. Many systems can produce excellent samples in expert hands. The more relevant benchmark for capital investment is what can be repeated by your operating team within a realistic ramp-up period.
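When a vendor supplies per-part measurements from a witnessed run, the difference between best-case and repeatable performance can be summarized directly. The sketch below assumes a metric where lower is better (burr height, for example) and uses the nearest-rank 95th percentile as a stand-in for a conservative figure; what counts as "guaranteed" remains a contractual matter, and the function names are hypothetical.

```python
import math
import statistics


def nearest_rank_percentile(values: list[float], percentile: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of data at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < percentile <= 100:
        raise ValueError("percentile must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(percentile * len(ordered) / 100)
    return ordered[rank - 1]


def summarize_run(values: list[float]) -> dict[str, float]:
    """Best-case, typical, and conservative figures for a lower-is-better metric."""
    return {
        "best": min(values),
        "median": statistics.median(values),
        "p95": nearest_rank_percentile(values, 95),
    }


if __name__ == "__main__":
    # ASSUMPTION: invented burr heights in micrometres from a 20-part run.
    burr_heights_um = [1.1, 0.9, 1.3, 1.0, 1.2, 0.8, 1.4, 1.1, 2.6, 1.0,
                       1.2, 0.9, 1.1, 1.3, 1.0, 3.1, 1.2, 1.1, 0.9, 1.0]
    print(summarize_run(burr_heights_um))
```

A sample picked for a brochure corresponds to the "best" figure. The median and 95th percentile are closer to what an operating team is likely to see once the run is long enough to include drift and outliers.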

It is also important to request downstream impact data. Did the pulse duration choice reduce cleaning, improve bonding, raise pass rates, or eliminate a finishing step? These business outcomes matter more than isolated process visuals.

Finally, compare serviceability and integration maturity. A technically superior pulse duration benchmark may lose value if spare parts lead times, process recipe transfer, or automation compatibility create deployment risk.

Selection guidance by real use case

If the job involves brittle glass, high-value medical parts, transparent substrates, or sub-micron precision where thermal damage is unacceptable, femtosecond benchmarks often justify the premium. Quality and yield protection usually drive the decision.

If the use case involves broader industrial micromachining, selective thin-film removal, electronics processing with moderate tolerances, or scaled production where cost and uptime matter heavily, picosecond benchmarks may offer the better overall business case.

When tolerances are tight but not extreme, the decision often turns on whether secondary finishing can be eliminated. If a shorter pulse duration removes polishing, cleaning, or defect sorting, total process economics may improve significantly.

Conversely, if your acceptance window is wider and post-processing is already built into the line, the higher capital cost of the shortest pulse duration may not produce a proportional operational return. This is why benchmark design must reflect workflow reality.
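The finishing-step trade-off can be expressed as cost per accepted part. The sketch below uses straight-line amortization and a flat finishing cost, both simplifying assumptions; the function name and every figure are hypothetical and serve only to show how the comparison can flip.

```python
def cost_per_accepted_part(capital_cost: float,
                           amortization_years: float,
                           annual_operating_cost: float,
                           accepted_parts_per_year: float,
                           finishing_cost_per_part: float = 0.0) -> float:
    """Straight-line capital plus operating cost per part, plus any finishing cost."""
    if amortization_years <= 0 or accepted_parts_per_year <= 0:
        raise ValueError("amortization period and part volume must be positive")
    annual_capital = capital_cost / amortization_years
    laser_cost = (annual_capital + annual_operating_cost) / accepted_parts_per_year
    return laser_cost + finishing_cost_per_part


if __name__ == "__main__":
    # ASSUMPTION: invented costs for two hypothetical systems, 1,000,000 parts/year.
    volume = 1_000_000

    # Case 1: the shorter pulse eliminates a finishing step.
    fs_no_finish = cost_per_accepted_part(600_000, 5, 80_000, volume, 0.00)
    ps_with_finish = cost_per_accepted_part(400_000, 5, 60_000, volume, 0.10)
    print(f"Finishing removed: fs {fs_no_finish:.2f} vs ps {ps_with_finish:.2f} per part")

    # Case 2: finishing stays in the line regardless of laser choice.
    fs_with_finish = cost_per_accepted_part(600_000, 5, 80_000, volume, 0.10)
    print(f"Finishing kept:    fs {fs_with_finish:.2f} vs ps {ps_with_finish:.2f} per part")
```

In the first case the higher-priced system is cheaper per part because it removes the finishing cost. In the second, where finishing is already built into the line, the same system is the more expensive option.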

The best practice is to score pulse duration options against five factors: quality target, throughput target, material sensitivity, process stability, and total cost of ownership. That framework is more reliable than comparing pulse duration alone.
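The five-factor comparison can be run as a simple weighted scoring matrix. This is a minimal sketch, assuming a 1 to 5 scale where higher is better for every factor (so a low-cost option scores high on total cost of ownership); the weights and scores are invented placeholders that each team would replace with its own.

```python
FACTORS = (
    "quality",
    "throughput",
    "material_sensitivity",
    "process_stability",
    "total_cost_of_ownership",
)


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of factor scores; both dicts must cover all five factors."""
    for name, mapping in (("scores", scores), ("weights", weights)):
        missing = [f for f in FACTORS if f not in mapping]
        if missing:
            raise ValueError(f"{name} is missing factors: {missing}")
    total_weight = sum(weights[f] for f in FACTORS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[f] * weights[f] for f in FACTORS) / total_weight


if __name__ == "__main__":
    # ASSUMPTION: hypothetical weights and 1-5 scores for one use case.
    weights = {"quality": 3, "throughput": 2, "material_sensitivity": 2,
               "process_stability": 2, "total_cost_of_ownership": 1}
    femtosecond = {"quality": 5, "throughput": 3, "material_sensitivity": 5,
                   "process_stability": 3, "total_cost_of_ownership": 2}
    picosecond = {"quality": 4, "throughput": 5, "material_sensitivity": 3,
                  "process_stability": 4, "total_cost_of_ownership": 4}
    print(f"Femtosecond option: {weighted_score(femtosecond, weights):.2f}")
    print(f"Picosecond option:  {weighted_score(picosecond, weights):.2f}")
```

The value of the exercise lies less in the final number than in forcing the team to agree on weights before vendor data arrives, so that the weighting reflects the use case rather than the most impressive demo.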

Conclusion: benchmark pulse duration against outcomes, not marketing labels

Ultrafast laser pulse duration benchmarks are most valuable when they are tied to real use cases and measurable industrial outcomes. For project managers, the right question is not simply which pulse is shorter, but which pulse duration delivers the best production result.

Across metals, glass, polymers, composites, and electronics, pulse duration shapes heat impact, feature fidelity, yield, and downstream process burden. Femtosecond systems often lead on ultimate precision, while picosecond systems often offer compelling practical balance.

The smartest selection process compares both under common acceptance criteria, realistic workflow conditions, and full-cost evaluation. When benchmarked this way, pulse duration becomes a powerful decision variable rather than a confusing technical specification.

For teams planning industrial deployment, that shift in perspective reduces risk, improves vendor comparison, and increases the odds that the chosen ultrafast laser platform will perform not only in a demo, but in sustained production.
